Content Moderation Service

Our Content Moderation Solutions

We offer end-to-end content review services for diverse industries including e-commerce, social networking, online learning, travel, gaming, dating apps, and more.

Text Moderation

- Detecting profanity, hate speech, spam, and offensive language.

- Filtering misleading information and inappropriate user comments.

- NLP-driven automated text analysis for speed and accuracy.

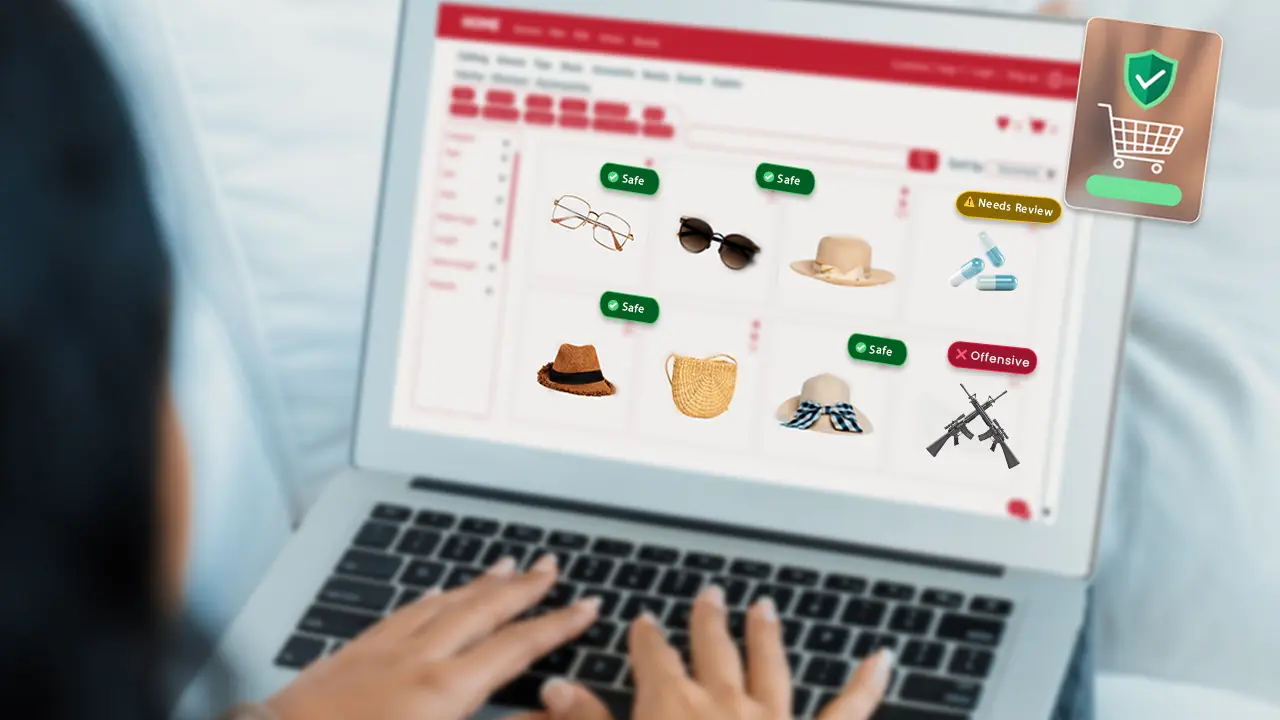

Image Moderation

- Identifying explicit, violent, or harmful imagery.

- AI-based object detection for inappropriate visuals.

- Contextual analysis to reduce false positives.

Video Moderation

- Reviewing live streams and pre-recorded videos.

- Detecting unsafe content, copyrighted material, or policy violations.

- Automated video scanning paired with human moderation for accuracy.

Social Media Moderation

- Monitoring user-generated posts, hashtags, and comments.

- Protecting communities from bullying, harassment, and misinformation.

- Ensuring alignment with platform guidelines.

Chat & Forum Moderation

- Filtering toxic chats in real time for gaming and community platforms.

- Blocking harassment, spam, and harmful discussions.

- Enabling safe and positive user engagement.

Marketplace & E-Commerce Moderation

- Reviewing product listings for compliance.

- Detecting counterfeit or prohibited items.

- Ensuring authenticity and trust in customer reviews.

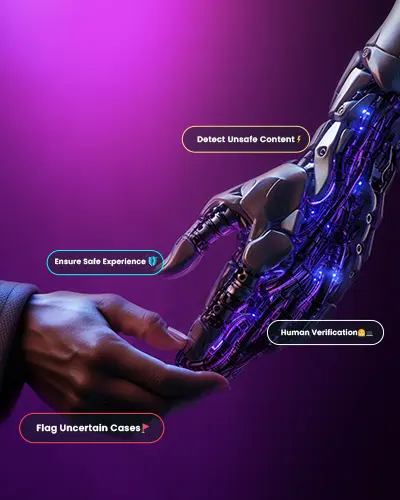

AI-Powered Content Moderation with Human Expertise

At Vaidik AI, we combine AI-driven content moderation tools with skilled human moderators for the best results. AI provides speed and scalability, while human reviewers supply the contextual understanding that machines cannot fully achieve.

This hybrid model helps businesses:

- Scale moderation for millions of UGC posts daily.

- Reduce operational costs while maintaining high accuracy.

- Customize moderation filters based on brand-specific needs.
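As an illustration of how a hybrid model of this kind is commonly wired together, the sketch below routes each item by a model's violation score: clear-cut cases are handled automatically and ambiguous ones go to a human review queue. This is a minimal sketch, not a description of Vaidik AI's actual system. The names `route` and `Decision` and both threshold values are hypothetical, and the score is assumed to come from some upstream classifier.

```python
from dataclasses import dataclass

# Hypothetical thresholds; a real deployment would tune these per policy and brand.
AUTO_REMOVE_AT = 0.9       # at or above this score, remove automatically
AUTO_APPROVE_BELOW = 0.2   # below this score, approve automatically


@dataclass
class Decision:
    action: str   # "approve", "remove", or "human_review"
    score: float  # the violation score that led to the action


def route(score: float) -> Decision:
    """Route one item using a classifier's violation score in [0, 1]."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= AUTO_REMOVE_AT:
        return Decision("remove", score)
    if score < AUTO_APPROVE_BELOW:
        return Decision("approve", score)
    # Ambiguous middle band: defer to a human moderator for context.
    return Decision("human_review", score)
```

With these assumed thresholds, `route(0.5)` yields the action `human_review`. Widening or narrowing the middle band is one way to trade review cost against accuracy.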

Why Choose Vaidik AI For Content Moderation Services?

- Proven expertise in large-scale content moderation projects.

- Hybrid model of AI automation + human intelligence.

- Data security & compliance with GDPR, HIPAA, and global standards.

- Customized solutions tailored to your platform needs.

- Dedicated multilingual teams for worldwide audience moderation.

Benefits of Outsourcing Content Moderation To Us

By partnering with Vaidik AI, you get:

⏩ 24/7 content monitoring services with global coverage.

⏩ Cost-effective content moderation outsourcing solutions.

⏩ Multilingual moderation for global platforms.

⏩ Scalable moderation support during high-traffic events.

⏩ Customizable workflows and flexible engagement models.

Contact Us

Get Started with Content Moderation Services

Ensure a safe, engaging, and trustworthy digital experience for your users. Partner with Vaidik AI for reliable, scalable, and cost-effective content moderation services.

Contact us today to learn more about our solutions and request a free consultation.

Frequently Asked Questions

Content moderation services involve the monitoring, reviewing, and filtering of user-generated content (UGC) such as text, images, videos, and comments. These services ensure that all published content complies with platform policies, legal regulations, and community standards.

The three main types of moderation typically include:

- Pre-Moderation: Content is reviewed before it goes live to ensure compliance with rules and guidelines.

- Post-Moderation: Content is published immediately, then reviewed after posting for violations.

- Reactive Moderation: Users flag inappropriate content, which is then reviewed by moderators.

Many modern platforms also use automated moderation (AI tools) alongside these traditional types for better speed and scalability.
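The difference between the three types above comes down to when content becomes visible and when it enters a review queue. The following minimal sketch makes that explicit. The names `Mode`, `Outcome`, and `on_submit` are hypothetical and chosen only for illustration.

```python
from enum import Enum
from typing import NamedTuple


class Mode(Enum):
    PRE = "pre"            # review before publishing
    POST = "post"          # publish, then review
    REACTIVE = "reactive"  # publish, review only if users flag it


class Outcome(NamedTuple):
    published: bool          # is the content live right after submission?
    queued_for_review: bool  # is it placed in the moderator queue right away?


def on_submit(mode: Mode) -> Outcome:
    """Describe what happens to a new item at submission time."""
    if mode is Mode.PRE:
        return Outcome(published=False, queued_for_review=True)
    if mode is Mode.POST:
        return Outcome(published=True, queued_for_review=True)
    # Reactive: the item goes live and is queued later only if a user flags it.
    return Outcome(published=True, queued_for_review=False)
```

An automated check, as mentioned above, could run in any of these modes before an item reaches the human queue.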

Content moderation faces several significant challenges, including:

- High volume of content being uploaded every second.

- Evolving harmful content tactics (e.g., hidden hate speech, coded language).

- Balancing freedom of expression vs. community safety.

- Emotional toll on human moderators, who often review disturbing material.

- Cultural and linguistic differences, making global moderation complex.

- Maintaining accuracy while moderating content quickly.

These challenges are why many organizations choose hybrid moderation solutions combining AI technology with human expertise to achieve scale, speed, and accuracy.

There are generally four types of content moderators, each with specific roles:

- AI or Automated Moderators: Algorithms that detect and flag harmful content at scale.

- In-House Moderators: Employees who work directly for the company to review content.

- Outsourced Moderators: Third-party teams hired to handle moderation for large volumes of content.

- Community Moderators: Trusted users or volunteers who help monitor platforms, often seen in forums and gaming communities.